Research workflows have changed quietly but dramatically. What once meant juggling Google Scholar tabs, messy PDFs, and citation chaos can now be partially automated. The catch is simple: not every AI research tool solves the same problem.

Some tools excel at finding sources fast. Others help interpret dense papers. A few act more like thinking partners than search engines. Treating them as interchangeable is where most users go wrong.

This editorial guide breaks down the most useful AI research tools right now, keeping the focus on real-world usefulness rather than feature hype.

AI can accelerate research, but it does not replace critical reading. Most tools summarize, cluster, or retrieve information. They do not check whether a claim is actually true the way a careful reader would.

Used properly, they remove hours of mechanical work. Used blindly, they can confidently compress nuance or miss context.

The smartest researchers in 2026 treat these tools as assistants, not authorities.

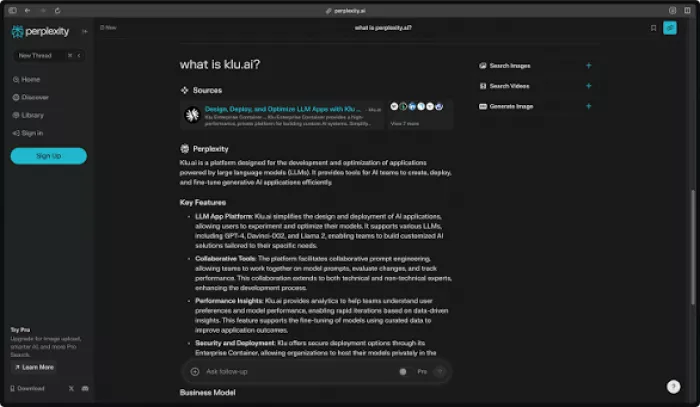

Official website: https://www.perplexity.ai

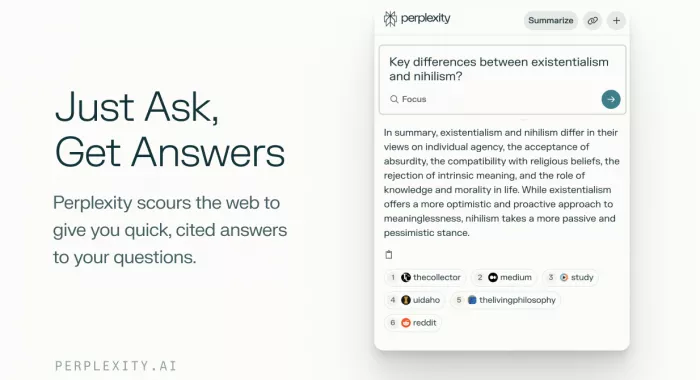

Perplexity functions as an AI-powered answer engine that combines real-time web search with summarized responses. Instead of manually opening multiple tabs, users receive a synthesized overview with inline citations.

The tool is particularly effective during the early discovery phase of research. It helps users quickly understand unfamiliar topics and locate relevant sources without heavy setup.

However, it remains primarily web-focused rather than deeply academic in orientation.

Perplexity shines when speed matters. It is strong for topic familiarization, quick fact validation, and scanning emerging developments.

For deep literature synthesis, however, specialized academic tools usually provide more structured outputs.

Pros

Perplexity delivers fast, citation-backed answers that make early-stage research significantly quicker. Its real-time web retrieval helps surface fresh information, and the interface is simple enough for non-technical users to adopt immediately. For topic familiarization and quick fact verification, it reduces tab overload noticeably.

Cons

It is not built specifically for deep academic workflows, so literature reviews can feel shallow compared to specialist tools. The quality of summaries still depends on source availability, and complex academic nuance can sometimes be compressed too aggressively. Advanced capabilities are also partially gated behind paid tiers.

Official website: https://elicit.com

Elicit is built specifically for academic research workflows. Its primary strength lies in automating parts of the literature review process by extracting structured insights from research papers.

Instead of acting like a general chatbot, it behaves more like a research assistant that surfaces methodologies, summaries, and relevant studies in a structured format.

The platform is particularly useful once research moves beyond surface exploration.

Elicit becomes most valuable during thesis work, systematic reviews, and academic comparison tasks. It helps researchers identify patterns across papers and surface relevant studies quickly.

The trade-off is reduced flexibility outside scholarly use cases.

Pros

Elicit is purpose-built for literature reviews and shines when extracting structured insights from research papers. It is particularly useful for identifying methodologies, comparing studies, and surfacing relevant academic work quickly. For thesis and systematic review workflows, it often saves substantial manual scanning time.

Cons

Its narrow academic focus makes it less flexible for general research tasks. New users may find the interface slightly technical at first, and it is not ideal for fast exploratory browsing outside scholarly domains. The workflow also assumes users already understand research fundamentals.

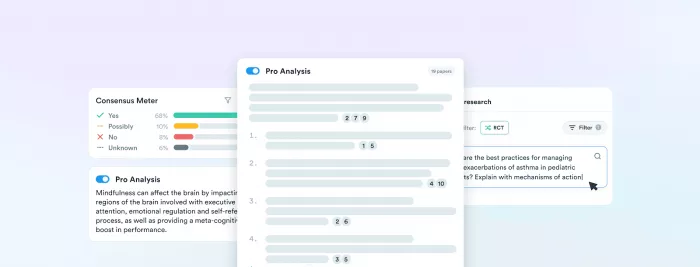

Official website: https://consensus.app

Consensus focuses on answering research questions directly from scientific literature. Instead of broad summaries, it attempts to show what peer-reviewed studies collectively indicate about a specific claim.

This makes it particularly useful for evidence validation rather than open-ended discovery.

It occupies a focused but valuable position in the research stack.

Consensus works well when the question is specific and evidence-driven. It helps researchers quickly determine whether academic literature supports a claim.

It is less suited for managing large research workflows end to end.

Pros

Consensus is strong when the goal is evidence validation. It surfaces study-backed answers quickly and keeps the focus on peer-reviewed research rather than general web noise. For claim checking and research-backed verification, it provides a very clean experience.

Cons

It is less suited for full research workflows or open-ended exploration. Its database, while strong, covers less ground than a general search engine, and it does not replace a full literature review process. Power users may also find its flexibility somewhat limited.

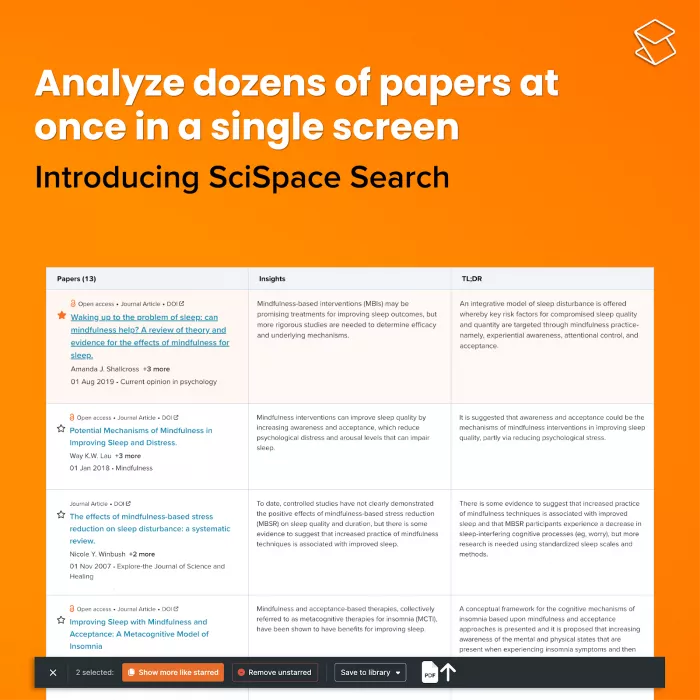

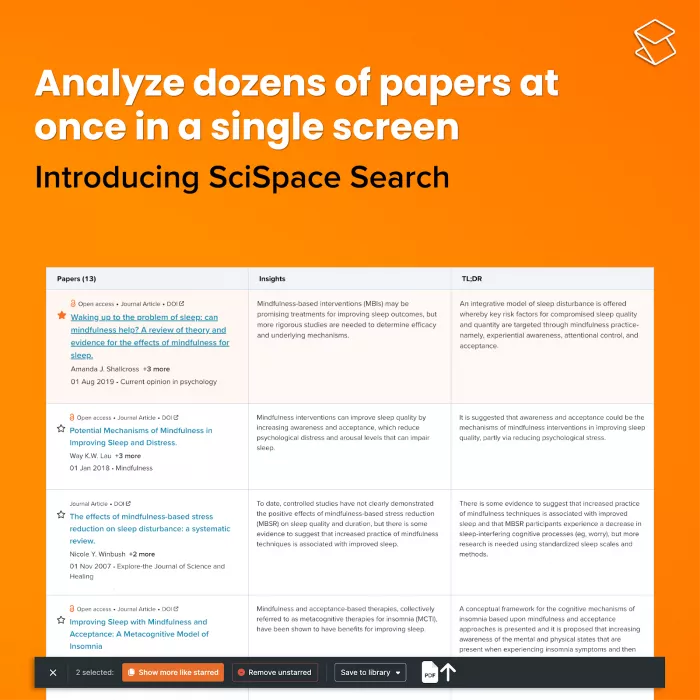

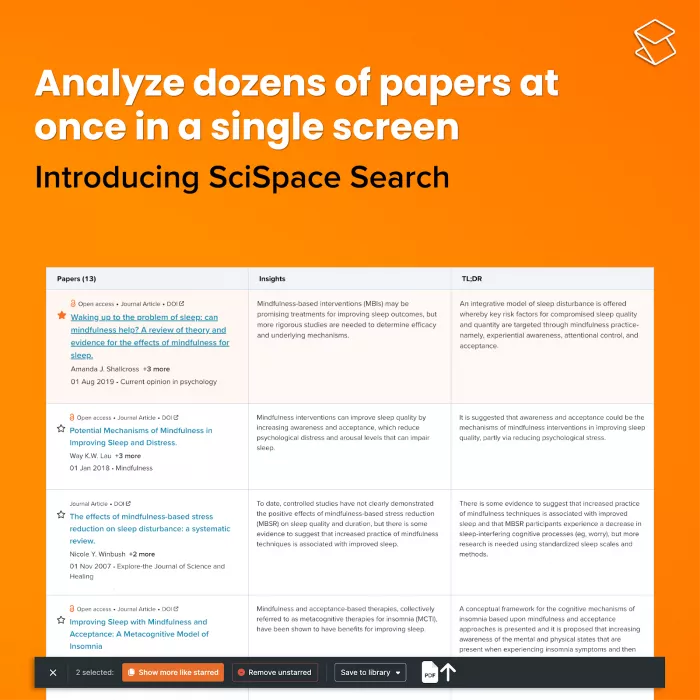

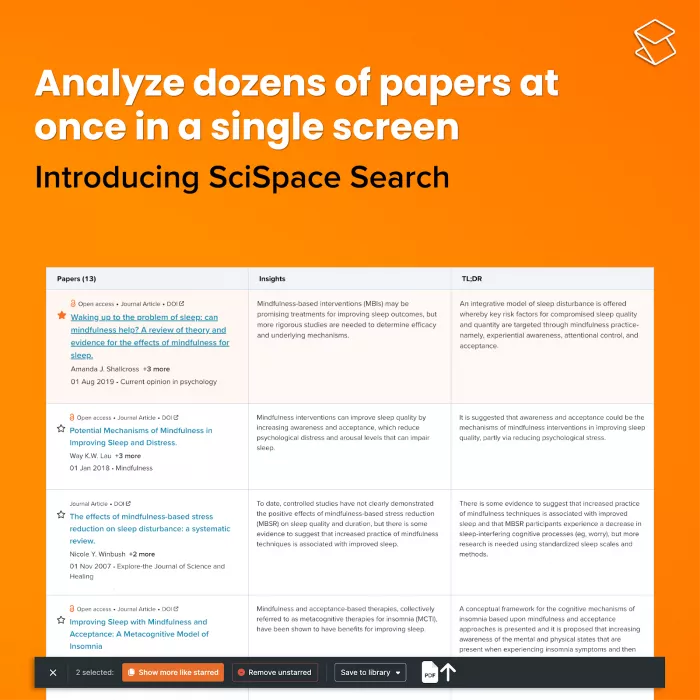

Official website: https://typeset.io

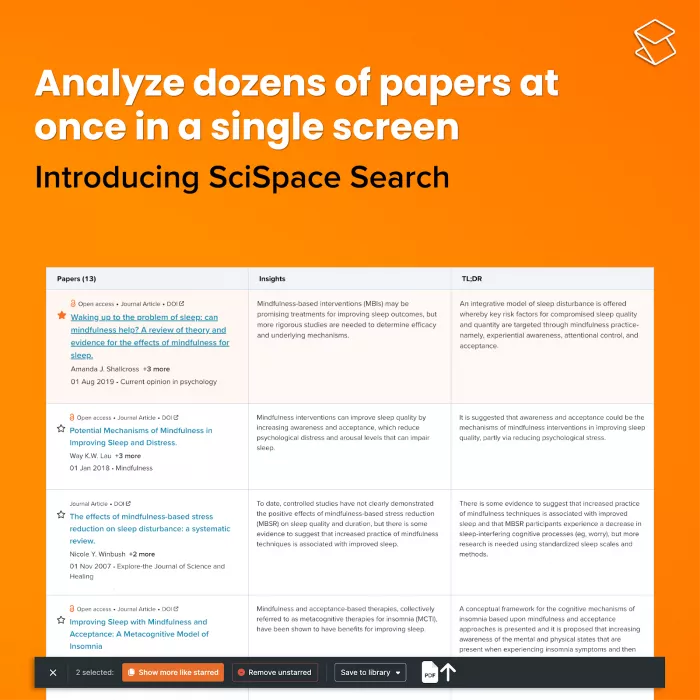

SciSpace targets one of the most persistent research pain points: understanding dense academic papers. Its AI Copilot allows users to upload PDFs and ask questions directly about the document.

The platform focuses more on comprehension than discovery. It helps break down complex terminology and extract key points from research papers.

For heavy reading workflows, this becomes immediately practical.

SciSpace is particularly useful for students, reviewers, and researchers who spend significant time decoding academic PDFs.

It is a weaker discovery engine than Perplexity or Elicit.

Pros

SciSpace excels at helping users understand dense academic papers. The PDF chat feature significantly reduces the friction of decoding complex research language, and it is especially helpful for students and early-stage researchers. For paper comprehension workflows, it often delivers immediate time savings.

Cons

It is weaker on the discovery side of research and depends heavily on uploaded documents. Outside academic contexts, its usefulness drops quickly. Some advanced features are also restricted to paid plans, which may limit heavy users.

Official website: https://chat.openai.com

ChatGPT remains one of the most flexible research companions available. It is less of a search engine and more of a reasoning and synthesis partner.

With proper prompting, it can help structure research plans, summarize complex material, and connect ideas across domains.

Its strength lies in thinking support rather than primary source retrieval.

ChatGPT is most valuable during ideation, outlining, and multi-step reasoning tasks. It helps researchers connect dots across information sources.

However, factual outputs should always be verified with primary sources.

Pros

ChatGPT remains one of the most versatile research companions available. It performs well for synthesis, outlining, brainstorming, and multi-step reasoning across domains. When guided properly, it can connect ideas across sources in ways that single-purpose tools cannot.

Cons

It requires active fact-checking because it is not citation-first by default. Output quality depends heavily on prompt quality, and the model can sound confident even when it is wrong or uncertain. For strict academic workflows, it works best when paired with evidence-focused tools.

| Tool | Best For | Source Reliability | Workflow Depth | Learning Curve | Ideal User |
|---|---|---|---|---|---|
| Perplexity | Fast web research | High with citations | Medium | Very low | General researchers |
| Elicit | Literature reviews | High | High | Medium | Academic researchers |
| Consensus | Evidence validation | High | Medium | Low | Evidence-focused users |
| SciSpace | Paper comprehension | Medium-high | Medium | Low | Students and reviewers |
| ChatGPT | Research synthesis | Variable | Very high | Medium | Power researchers |

| Research Task | Tool That Typically Wins | Why |
|---|---|---|
| Quick topic overview | Perplexity | Real-time cited summaries |
| Systematic literature review | Elicit | Structured paper extraction |
| Evidence verification | Consensus | Study-backed answers |
| Understanding dense PDFs | SciSpace | Direct paper chat |
| Cross-domain synthesis | ChatGPT | Flexible reasoning engine |

There is no single best AI tool for research because research itself is not one task. It is a pipeline.

The most effective researchers in 2026 are not choosing one tool. They are building a small, deliberate stack and using each where it actually performs best.
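
For readers who script their workflows, the idea of a stack can be written down as a simple lookup. The sketch below is illustrative only: the task-to-tool pairs are copied from the task table in this guide, while the names `TOOL_FOR_TASK`, `pick_tool`, and `plan_stack` are hypothetical and do not correspond to any tool's API.

```python
# Illustrative sketch: the pairs mirror the task table in this guide.
# All names here are hypothetical; no tool's real API is being called.
TOOL_FOR_TASK = {
    "quick topic overview": "Perplexity",
    "systematic literature review": "Elicit",
    "evidence verification": "Consensus",
    "understanding dense pdfs": "SciSpace",
    "cross-domain synthesis": "ChatGPT",
}


def pick_tool(task: str) -> str:
    """Return the tool the table pairs with a task, or flag unmapped tasks."""
    key = task.strip().lower()
    # Unmapped tasks get no default tool: choosing one is a judgment call.
    return TOOL_FOR_TASK.get(key, "no default: choose manually")


def plan_stack(tasks: list[str]) -> list[tuple[str, str]]:
    """Pair each step of a research pipeline, in order, with its tool."""
    return [(task, pick_tool(task)) for task in tasks]


if __name__ == "__main__":
    pipeline = ["Quick topic overview", "Evidence verification", "Grant writing"]
    for step, tool in plan_stack(pipeline):
        print(f"{step}: {tool}")
```

The fallback for unmapped tasks is deliberate: the lookup encodes defaults, and anything outside the table still needs a human decision.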

AI can remove hours of mechanical work. Just do not outsource your judgment.
