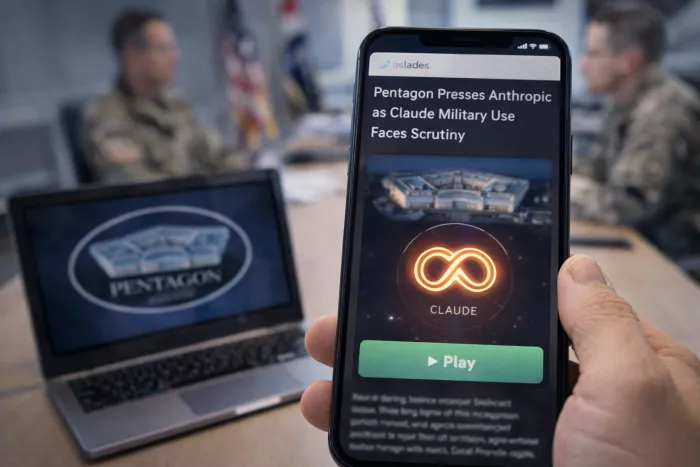

U.S. Defense Secretary Pete Hegseth has summoned Anthropic chief executive Dario Amodei to the Pentagon for a high-stakes meeting over whether the military can continue using the company’s Claude models. The talks highlight a growing dispute over AI guardrails in defense and surveillance applications.

According to reports from Axios and Reuters, the meeting is expected to focus on a contract deadlock between the Defense Department and Anthropic. The Pentagon has been using Claude in classified decision-support pilots, but negotiations have stalled over the company’s insistence on strict usage limits.

Officials familiar with the discussions have indicated the session is not routine. One Pentagon official described it as critical to determining whether Anthropic remains part of the department’s AI stack. The Defense Department is reportedly prepared to reconsider future work if the company does not ease certain constraints.

Anthropic is currently the only major frontier model developer that has declined to directly integrate into a new internal U.S. military AI network, even as competitors such as OpenAI, Google, and xAI have moved forward.

Anthropic has publicly outlined areas where it does not want its models deployed. The company has opposed use in fully autonomous weapons systems that can select and engage targets without human control. It has also resisted involvement in domestic mass surveillance systems that could track or suppress dissent in democratic countries.

Amodei has repeatedly warned about risks from poorly governed frontier AI. In a January essay, he argued that the world is considerably closer to serious danger than it was in 2023, while still advocating for enforceable limits rather than broad bans.

The company has generally aligned with prior U.S. safety initiatives, including voluntary national security testing and support for export controls and model evaluation standards. Its current position attempts to draw a boundary between acceptable decision-support roles and prohibited weapons or dragnet surveillance uses.

Since taking office, Hegseth has framed his AI agenda around removing what he calls ideological barriers within military technology. In a January speech at SpaceX’s Texas facility, he said the Pentagon would avoid AI systems that cannot support warfighting needs and emphasized the importance of tools that operate without constraints on lawful military applications.

The defense secretary has publicly praised alternative AI partners, including xAI and Google, as examples of companies willing to align with the Pentagon’s direction. Anthropic has been notably absent from those endorsements.

This framing has intensified the standoff, with safety-driven limitations now positioned as a point of strategic disagreement.

The dispute reportedly escalated following a January U.S. operation that captured Venezuelan President Nicolás Maduro. Bloomberg and Axios reported that Claude was used in some capacity during the mission, after which Anthropic’s team raised questions about how the model had been deployed and audited.

Pentagon officials interpreted those inquiries as a potential sign the company might retreat from operational support. The episode reportedly triggered internal discussions about replacing Anthropic with other providers despite Claude’s strong technical performance in earlier evaluations.

The upcoming meeting is widely viewed as an attempt either to establish a new framework for acceptable use or to prepare for a possible split.

Defense analysts note that artificial intelligence is already embedded across military workflows, including logistics planning, satellite image analysis, and operational simulations. The current debate centers less on adoption and more on governance.

Key unresolved questions include who sets binding limits on autonomous lethal force, what level of human oversight is required in targeting decisions, and how surveillance capabilities should be constrained in democratic contexts.

Some experts warn that excluding safety-focused vendors could have unintended consequences. If more cautious developers withdraw, less restrictive providers may fill the gap, potentially increasing long-term risk.

The Hegseth-Amodei meeting is emerging as a test case for how far AI developers can enforce safety guardrails while still participating in national security work. The outcome may influence not only Anthropic’s future role with the Pentagon but also the broader balance between military demand and AI governance in the years ahead.
